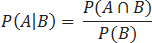

You’re sitting in the lobby of a hostel in the middle of Scotland, and a new batch of guests walks through the door. You generally dislike talking to people (let’s say you don’t like interacting with 90% of the population), but a cute girl walks in listening to a Crystal Castles album (a band; let’s say around 5% of people like them). From personal experience, you know that the people you do enjoy talking to are more likely than average to like Crystal Castles (let’s be generous and say 20% of them do). With just these observations, you’re able to determine how likely it is that you’ll like interacting with this person.

From last week, we know the definition of conditional probability: the probability of event A, given that event B is true, is equal to the following:

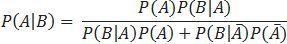

If we define event A as “liking social interaction with a person” and event B as “that person likes Crystal Castles,” the information in the paragraph above provides us with P(A), P(B), and P(B|A). If we write out the conditional probability of event B given event A, we can see that the probability of A∩B is equal to P(A) × P(B|A) and conclude that:

$$P(A \mid B) = \frac{P(A)\,P(B \mid A)}{P(B)}$$

This is known as Bayes’ theorem. We can therefore conclude that the chance of you liking social interaction with a person who likes Crystal Castles is .1 × .2 ÷ .05 = .4. With just one observation about a person, we have changed the probability of us liking a social interaction with that person from .1 to .4. Using an observation to modify the probability of an event (in this case, the observation is “that person likes Crystal Castles,” and the event is “you will like social interaction with that person”) will be Case 1 of how to practically use Bayes’ Theorem.
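The arithmetic in Case 1 is easy to check in code. Here is a minimal Python sketch; the function name `bayes` and the variable names are illustrative choices rather than anything from the article itself.

```python
def bayes(p_a, p_b_given_a, p_b):
    """Return P(A|B) = P(A) * P(B|A) / P(B)."""
    if p_b <= 0:
        raise ValueError("P(B) must be positive")
    return p_a * p_b_given_a / p_b

# Numbers from the hostel example.
p_like = 0.10            # P(A): you enjoy talking to a random person
p_fan = 0.05             # P(B): a random person likes Crystal Castles
p_fan_given_like = 0.20  # P(B|A): share of fans among people you enjoy

posterior = bayes(p_like, p_fan_given_like, p_fan)
print(f"P(A|B) = {posterior:.2f}")  # P(A|B) = 0.40
```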

Case 2: Using the Results of an Experiment to Make a Better Prediction

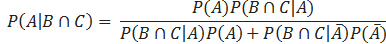

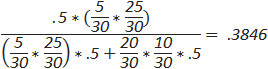

Let’s say we have two boxes in front of us: one has ten vials of delicious orange juice (it’s delicious because otherwise, it wouldn’t be worth the danger) and twenty vials of a deadly poison, while the other has twenty-five vials of orange juice and five vials of poison. We want to guess which box contains less poison, and our initial probability (also known as the prior probability) of selecting correctly is .5. Let’s say that we have a lab assistant (for anyone who knows him, assume we use Josh Jacobson to perform this experiment) try a vial from a box selected at random; how can we use the results of this experiment to make a better guess about which box is which? Let’s assume the result of our experiment is that Josh drinks a vial and breathes a sigh of relief shortly before beginning to vomit up massive amounts of blood as he writhes in agony on the floor. We can then say event A is “the box contains five vials of poison,” and event B (our experiment) is “Josh dies.” P(A) is our previously mentioned prior probability and is .5, and P(B) is the probability Josh dies, which can be expressed as:

$$P(B) = P(A)\,P(B \mid A) + P(\neg A)\,P(B \mid \neg A) = .5 \times \frac{5}{30} + .5 \times \frac{20}{30} = \frac{5}{12}$$

Here, $P(\neg A)$ is the probability of not selecting the box with five vials of poison. Therefore, we can use our above expression for Bayes’ Theorem to find the probability that the box Josh took a deadly vial from is the box with five vials of poison.

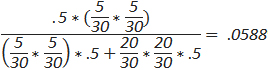

Which is equal to:

$$P(A \mid B) = \frac{.5 \times \frac{5}{30}}{\frac{5}{12}} = \frac{1}{5} = .2$$

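There is only a .2 probability that the box Josh drew from is the one with five vials of poison, so guessing that the other box is the safer one is correct with probability .8. From one experiment, we have been able to shift the odds of a correct guess from 1 in 2 to 4 in 5. The ability to make observations or conduct experiments is, at times, an extremely powerful way to maximize the likelihood (another technical math term that the reader could investigate on his or her own) of making the correct decision.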
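The Case 2 numbers can be reproduced with exact fractions. This is a small Python sketch under the assumptions stated in the example (a box chosen at random, one vial drawn at random); the helper `p_poison` and the variable names are made up for illustration.

```python
from fractions import Fraction

# Box contents from the example: (orange juice vials, poison vials).
safer_box = (25, 5)      # event A: Josh drew from this box
deadlier_box = (10, 20)  # event not-A: Josh drew from this box

def p_poison(box):
    """Chance that a vial drawn at random from `box` is poison."""
    juice, poison = box
    return Fraction(poison, juice + poison)

prior_a = Fraction(1, 2)
p_b_given_a = p_poison(safer_box)         # 5/30
p_b_given_not_a = p_poison(deadlier_box)  # 20/30

# Law of total probability for P(B), then Bayes' theorem for P(A|B).
p_b = prior_a * p_b_given_a + (1 - prior_a) * p_b_given_not_a
posterior_a = prior_a * p_b_given_a / p_b

print(p_b)              # 5/12
print(posterior_a)      # 1/5
print(1 - posterior_a)  # 4/5
```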

Case 3: Updating Based on Further Observations or Experiments

We can (obviously) incorporate more than one observation or experiment into our Bayesian considerations. Let’s say we perform the experiment described in Case 2 again with a new hypothetical volunteer (let’s also say we replace the vial of poison Josh drank, which makes the calculations here easier on the eyes). The volunteer will consume a vial from the same box Josh took from, so let’s consider event C as “the volunteer dies” and event D as “the volunteer has a wonderful glass of orange juice and takes a nice, relaxing walk around town afterward.” Therefore, the results of our experiment will be B∩C or B∩D, and we get:

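For C:

$$P(A \mid B \cap C) = \frac{.5 \times \left(\frac{5}{30}\right)^2}{.5 \times \left(\frac{5}{30}\right)^2 + .5 \times \left(\frac{20}{30}\right)^2} = \frac{1}{17} \approx .06$$

For D:

$$P(A \mid B \cap D) = \frac{.5 \times \frac{5}{30} \times \frac{25}{30}}{.5 \times \frac{5}{30} \times \frac{25}{30} + .5 \times \frac{20}{30} \times \frac{10}{30}} = \frac{5}{13} \approx .38$$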

Your first thought might be that the second experiment (in the case of event D) makes us less likely to predict the correct box, because it pulls the probability back toward .5. That is the wrong way to look at it. We should always consider all available data, because the end goal is to select the box that most likely contains only five vials of poison, and the only way to do that is to select the other box whenever the probability that the tested box contains five vials is less than .5.
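Because the vial is replaced, each drink can be treated as a separate update, with the posterior from one experiment becoming the prior for the next. The Python sketch below follows that idea; the `update` function and its `died` flag are illustrative names, not part of the original write-up.

```python
from fractions import Fraction

P_POISON_A = Fraction(5, 30)       # poison chance if it is the five-vial box
P_POISON_NOT_A = Fraction(20, 30)  # poison chance if it is the twenty-vial box

def update(prior_a, died):
    """One Bayesian update of P(A) after a volunteer drinks a vial."""
    like_a = P_POISON_A if died else 1 - P_POISON_A
    like_not_a = P_POISON_NOT_A if died else 1 - P_POISON_NOT_A
    evidence = prior_a * like_a + (1 - prior_a) * like_not_a
    return prior_a * like_a / evidence

after_josh = update(Fraction(1, 2), died=True)  # event B
after_c = update(after_josh, died=True)         # events B and C
after_d = update(after_josh, died=False)        # events B and D

print(after_josh, after_c, after_d)  # 1/5 1/17 5/13
```

In both outcomes the probability stays below one half, so the better guess is still that the untested box is the one with five vials of poison.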

From the examples above, we can learn some important principles about Bayes’ Theorem:

- We can use objective (as we did in the case of the poison) or subjective (such as those used in the social interaction case) probabilities in our calculation. Granted, using subjective probabilities adds in further chance of error, but if you’re confident in your estimation abilities, it grants you a powerful tool.

- Even small or apparently minor observations can be useful. In Case 1, the fact that a person liked a particular band increased our probability of success by 300%.

- The more unlikely the observation, the more powerful it is. This is also demonstrated by Case 1, as one of the main reasons the observation had such a powerful effect was that P(B) was fairly small. This is because if you seek to distinguish an individual from a population, the rarer the trait, the further from the population the individual will be.

- Multiple experiments may make decisions “less clear” on paper but increase our certainty in the result.
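The third principle can be seen numerically by holding the Case 1 inputs fixed and changing only P(B). In this Python sketch, .05 is the value from Case 1; the other values of P(B) are hypothetical and chosen purely for comparison, and `posterior_given` is an illustrative name.

```python
P_A = 0.10          # prior from Case 1
P_B_GIVEN_A = 0.20  # P(B|A) from Case 1

def posterior_given(p_b):
    """P(A|B) for a given P(B), with the Case 1 prior and P(B|A) held fixed."""
    return P_A * P_B_GIVEN_A / p_b

# Only 0.05 comes from Case 1; the other P(B) values are made up.
for p_b in (0.20, 0.10, 0.05, 0.025):
    print(f"P(B) = {p_b:.3f} -> P(A|B) = {posterior_given(p_b):.2f}")
```

When P(B) equals P(B|A), the observation tells us nothing and the posterior equals the prior of .1; the rarer the trait, the larger the update.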

Using Bayes’ Theorem in Magic: The Gathering

Figuring out Your Opponent’s Deck

One of the most obvious ways to use Bayes’ Theorem in Magic is to figure out what archetype your opponent is playing. Let’s assume a metagame of 33% Delver, 33% W/U control, and 33% mono-red. If our opponent plays a turn-one Glacial Fortress (assuming Delver and W/U control both always play four), we know that P(Delver) = P(W/U control) = .5, and P(mono-red) = 0. Another generally useful observation is that players with a plethora of different dice are usually newer and less experienced and tend toward more aggressive strategies.
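The same update works for any number of archetypes: multiply each prior by the chance of seeing the observation with that deck, then renormalize. The Python sketch below assumes a placeholder likelihood of 0.3 for a turn-one Glacial Fortress from either blue deck; that number is hypothetical, and only the ratio between the decks affects the result.

```python
def posterior(priors, likelihoods):
    """Normalize prior * likelihood over a set of archetypes."""
    joint = {deck: priors[deck] * likelihoods[deck] for deck in priors}
    total = sum(joint.values())
    if total == 0:
        raise ValueError("observation is impossible under every archetype")
    return {deck: p / total for deck, p in joint.items()}

priors = {"Delver": 1 / 3, "W/U control": 1 / 3, "mono-red": 1 / 3}

# Hypothetical chance that each deck leads with Glacial Fortress on turn one.
# 0.3 is a placeholder, not a measured number.
likelihoods = {"Delver": 0.3, "W/U control": 0.3, "mono-red": 0.0}

result = posterior(priors, likelihoods)
for deck, p in result.items():
    print(f"{deck}: {p:.2f}")
```

If one of the two decks were more likely to lead with the land, changing its likelihood would tilt the split away from .5 each.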

Calling Bluffs and Figuring out Your Opponent’s Hand

The most important use of Bayes’ Theorem in the context of a game of Magic is deducing your opponent’s hand and calling bluffs. As the purpose of this article (as well as last week’s) is to teach the math behind the game, we’ll only use one example here (but Part 3 of this series will consist entirely of examples using the material from this week and last).

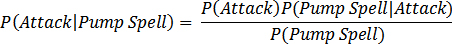

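Your opponent has four cards in hand and controls a 2/2, while you’re tapped out and have a 3/3. He attacks with his 2/2, and you want to know the probability of a successful block, which is one minus the probability that he holds a pump spell given that he attacked. The form of Bayes’ Theorem here would be:

$$P(\text{Pump Spell} \mid \text{Attack}) = \frac{P(\text{Pump Spell})\,P(\text{Attack} \mid \text{Pump Spell})}{P(\text{Attack})}$$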

This is a combination of both objective probabilities [P(Pump Spell)] and subjective ones. We first must estimate the probability that our opponent attacks (which will vary wildly from opponent to opponent, so it’s important to have good reads) and the probability that our opponent is not bluffing. We combine this with what we learned last week to find the probability he has a pump spell, which will be (assuming he has one pump spell in his deck and that N of his cards, his hand plus his library, are still unseen):

$$P(\text{Pump Spell}) = 1 - \frac{\binom{N-1}{4}}{\binom{N}{4}} = \frac{4}{N}$$
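As a rough sketch of how the pieces fit together, the Python below computes the objective part with a hypergeometric count and then applies Bayes’ Theorem with subjective reads. Every number in it is an assumption made for illustration: 50 unseen cards, a 90% chance he attacks when he holds the pump spell, and a 20% chance he attacks as a bluff. The function names are likewise hypothetical.

```python
from math import comb

def p_at_least_one(copies, unseen, hand):
    """Hypergeometric chance that `hand` cards out of `unseen` hold >= 1 copy."""
    return 1 - comb(unseen - copies, hand) / comb(unseen, hand)

def p_pump_given_attack(p_pump, p_attack_if_pump, p_attack_if_no_pump):
    """Bayes' theorem, with P(Attack) expanded by total probability."""
    p_attack = p_pump * p_attack_if_pump + (1 - p_pump) * p_attack_if_no_pump
    return p_pump * p_attack_if_pump / p_attack

# Assumed: 50 unseen cards (hand plus library), a 4-card hand, one pump spell.
p_pump = p_at_least_one(copies=1, unseen=50, hand=4)  # equals 4/50

# Subjective reads on this opponent (hypothetical values).
p_attack_if_pump = 0.9     # he nearly always attacks when he has the trick
p_attack_if_no_pump = 0.2  # how often he attacks as a bluff

p_trick = p_pump_given_attack(p_pump, p_attack_if_pump, p_attack_if_no_pump)
print(f"P(pump spell in hand)  = {p_pump:.3f}")
print(f"P(pump spell | attack) = {p_trick:.3f}")
print(f"P(successful block)    = {1 - p_trick:.3f}")
```

With these made-up reads, the attack raises the chance he holds the pump spell from 8% to roughly 28%, so the result is only as good as the estimates fed into it.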

Even after finding the probability of him bluffing, it’s still necessary to decide on a threshold of when to block, which varies greatly from player to player and scenario to scenario and is far outside what will be covered in this article series. Come back next week for a turn-by-turn walkthrough of all the math applicable to a single game of Magic.

Chris Mascioli

@dieplstks on Twitter